Instance Segmentation

Overview

The Instance Segmentation node detects individual object instances and returns both a pixel-level segmentation contour and standard object metadata (bounding box, label, confidence) for each instance.

Unlike bounding-box detection, this node provides the precise shape boundary of each detected object. Unlike semantic segmentation, each instance is treated separately — two overlapping objects of the same class produce two distinct contours. Use this node when exact shape boundaries matter, such as for area measurement, masking, or defect inspection.

Input

Input Image

image (required): The image frame to analyze. Connect this to a camera or upstream image output.

Model Directory Path

string (required): Path to the segmentation model directory. See Model Directory Path for details.

Confidence Threshold

number (required): Minimum confidence score to keep a detected instance. See Confidence Threshold for tuning guidance.

Default: 0.5

Ignore Labels

array: List of label strings to exclude from the output. Instances with a matching label are discarded even if they meet the confidence threshold.
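The combined effect of Confidence Threshold and Ignore Labels can be pictured with a minimal Python sketch. This is an illustration of the described behavior, not the node's actual implementation: the function name `filter_instances`, the sample data, and the choice to keep a score exactly equal to the threshold are all assumptions.

```python
from typing import Any, Dict, Iterable, List


def filter_instances(
    instances: Iterable[Dict[str, Any]],
    confidence_threshold: float = 0.5,
    ignore_labels: Iterable[str] = (),
) -> List[Dict[str, Any]]:
    """Keep instances that meet the threshold and whose label is not ignored."""
    ignored = set(ignore_labels)
    return [
        inst
        for inst in instances
        # Assumption: a score exactly equal to the threshold is kept.
        if inst["confidence"] >= confidence_threshold
        and inst["label"] not in ignored
    ]


# Hypothetical raw detections, before any filtering.
raw = [
    {"label": "bolt", "confidence": 0.92},
    {"label": "bolt", "confidence": 0.31},    # below the threshold
    {"label": "shadow", "confidence": 0.88},  # confident, but ignored by label
]

kept = filter_instances(raw, confidence_threshold=0.5, ignore_labels=["shadow"])
print([(d["label"], d["confidence"]) for d in kept])  # [('bolt', 0.92)]
```

Note that the `shadow` instance is dropped despite its high score, which matches the rule that a matching label is discarded regardless of confidence.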

Overlay Results

boolean (required): Whether to draw filled segmentation masks and bounding boxes on the output frame. See Overlay Results.

Advanced Setting

boolean: Reveals extra framework and architecture fields for non-.nam models. See Advanced Setting.

Output

Overlay Image

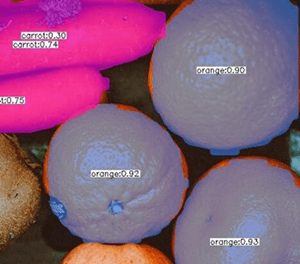

image: Output frame from the node. If overlays are enabled, each segmented instance is shown with a filled color mask and bounding box.

Detected Count

integer: Number of object instances segmented in the current frame.

Detected Objects

array: Array of segmentation result objects. Each object contains:

- bbox (array): Bounding box [x, y, width, height] of the instance.
- contour (array): Pixel-level boundary points of the segmented instance as [x, y] pairs.
- label (string): Predicted class label.
- confidence (number): Detection confidence score.
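For downstream tasks such as area measurement, the contour of each detected object can be turned into an area estimate. The following Python sketch applies the shoelace formula to the [x, y] pairs. It assumes that each contour is a single closed polygon with its points listed in order around the boundary, which this page does not state explicitly. The helper names and the sample object are hypothetical.

```python
from typing import List, Sequence


def contour_area(contour: Sequence[Sequence[float]]) -> float:
    """Polygon area in square pixels via the shoelace formula."""
    n = len(contour)
    if n < 3:
        return 0.0  # a point or a line segment encloses no area
    twice_area = 0.0
    for i in range(n):
        x1, y1 = contour[i]
        x2, y2 = contour[(i + 1) % n]  # wrap around to close the polygon
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0


def fill_ratio(obj: dict) -> float:
    """Fraction of the bounding box covered by the contour polygon."""
    _, _, width, height = obj["bbox"]
    box_area = width * height
    return contour_area(obj["contour"]) / box_area if box_area else 0.0


# Hypothetical entry shaped like one element of Detected Objects.
detected_objects: List[dict] = [
    {
        "bbox": [0, 0, 40, 30],
        "contour": [[0, 0], [40, 0], [0, 30]],  # a right triangle
        "label": "bracket",
        "confidence": 0.87,
    }
]

for obj in detected_objects:
    print(obj["label"], contour_area(obj["contour"]), fill_ratio(obj))
    # bracket 600.0 0.5
```

The result is the area of the polygon through the contour points, so it can differ slightly from a count of mask pixels. The fill ratio shows why a contour is more informative than a bounding box: here the box overstates the object's area by a factor of two.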